Improve Your HR Programs through Experiments

Always be testing.

Hi, Friends!

It’s been a week in Portugal, and I have been having a blast. We went through the Azores, where we climbed all of the volcanoes. Then, I saw the magical sunset over Porto. Now, I am on a train on the way to Lisboa.

This is the final week here.

But this post is not about food and wine (maybe it should be).

It is about People Analytics and experimentation.

We tasted dozens of wines over the last several days, including Vinho Verde and the well-known Port wines. This is all testing and experimentation to find what works for our palates and tastes.

But you can apply the same ideas of experimentation to HR.

Let’s take a look at an example:

The Problem

First things first, let’s set the context.

Once upon a time, I was working with a client going through rapid growth. They had built a great product and a professional services organization to support it. But…

The client was not satisfied with the way they measured performance. They didn't know how well the engineers were building the product. They also did not know which leaders made an outsized impact on the company.

How did I know?

Well, they showed me a 9-box where 70% of people were in the top right box.

Sounds familiar? Happens all the time.

Sometimes, oversimplifying costs you the resolution you need and lumps everyone into the same performance level.
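If you want to check this on your own data, here is a minimal sketch of the calculation. The ratings below are made up for illustration (they are not the client's data), and `box_shares` is a hypothetical helper name. It simply counts how many people land in each 9-box cell and reports the share in the top right one.

```python
from collections import Counter

# Hypothetical ratings as (performance, potential) pairs.
# Illustrative data only, chosen so that 7 of 10 people are high/high.
ratings = [
    ("high", "high"), ("high", "high"), ("high", "high"), ("high", "high"),
    ("high", "high"), ("high", "high"), ("high", "high"),
    ("medium", "high"), ("high", "medium"), ("medium", "medium"),
]


def box_shares(ratings):
    """Return the share of people in each (performance, potential) box."""
    counts = Counter(ratings)
    total = len(ratings)
    return {box: n / total for box, n in counts.items()}


shares = box_shares(ratings)
top_right = shares.get(("high", "high"), 0.0)
print(f"Share in the top right box: {top_right:.0%}")
```

When one box holds most of the company, the tool is no longer telling you much about who is actually different from whom.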

Here is where experimentation started.

Hypothesis 1: The managers are too nice

The first hypothesis was that the managers were WAAAYYYY too nice.

They were promoted rapidly to new levels and sometimes managed people who were their peers just months before. They often did not have proper management training to talk about performance. And they wanted to make sure their direct reports were happy.

So, they gave high ratings and high fives!

To address this, the COO I worked with wanted, against my advice, to enforce a new system that forced every manager to rank their talent from best to worst.

We tested this idea with a forced distribution in the new performance review.

The resistance was strong. And the sentiment was negative.

The technique introduced dissatisfaction, fear, and mistrust of the company’s management. But it also produced the right distribution of talent for the company and started maturing the managers.
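For readers who have not seen a forced distribution in practice, here is a rough sketch of the mechanics. Everything in it is an assumption for illustration: the 20/70/10 quotas, the employee IDs, the scores, and the `forced_distribution` function name. It is not the exact scheme the client used. The idea is that people are ranked by score and then assigned labels by quota, no matter how close the scores are.

```python
def forced_distribution(scores, quotas):
    """Assign rating labels by rank order.

    scores: dict of name -> manager score (higher is better).
    quotas: list of (label, share) pairs from best to worst; shares sum to 1.
    """
    ranked = sorted(scores, key=scores.get, reverse=True)
    n = len(ranked)
    result = {}
    start = 0
    cumulative = 0.0
    for label, share in quotas:
        cumulative += share
        end = round(cumulative * n)  # cut-off position for this label
        for name in ranked[start:end]:
            result[name] = label
        start = end
    # Anyone left over because of rounding gets the last label.
    for name in ranked[start:]:
        result[name] = quotas[-1][0]
    return result


# Hypothetical inflated manager scores: everyone sits between 4.0 and 4.9.
scores = {f"E{i:02d}": round(5.0 - i / 10, 1) for i in range(1, 11)}

# Assumed quotas, for illustration only.
quotas = [("top", 0.2), ("middle", 0.7), ("bottom", 0.1)]

labels = forced_distribution(scores, quotas)
for name in sorted(labels):
    print(name, scores[name], labels[name])
```

Notice that the person labeled "bottom" still has a score of 4.0 out of 5. That gap between the score and the label is a plausible source of the fear and mistrust described above.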

What the COO learned from this initiative:

Where forced ranking does not work:

It disempowers managers

It breaks down trust in management

It creates a negative sentiment

Where forced ranking works:

It produces a more realistic distribution of performance

It helps mature the managers to start thinking about the talent properly

But something about forced ranking did not feel right. So, we proceeded to test a new hypothesis.

Hypothesis 2: 9-box needs to improve

The first hypothesis suggested that using a forced distribution allows for a better resolution of talent. Likely, this was because the scale was larger than a simple 3-point scale.

However, it also generated much dissatisfaction among the talent and managers.

So, the next step was to iterate on the process and improve the distribution of the 9-box.

This means creating a broader scale for performance and potential.

Side note…

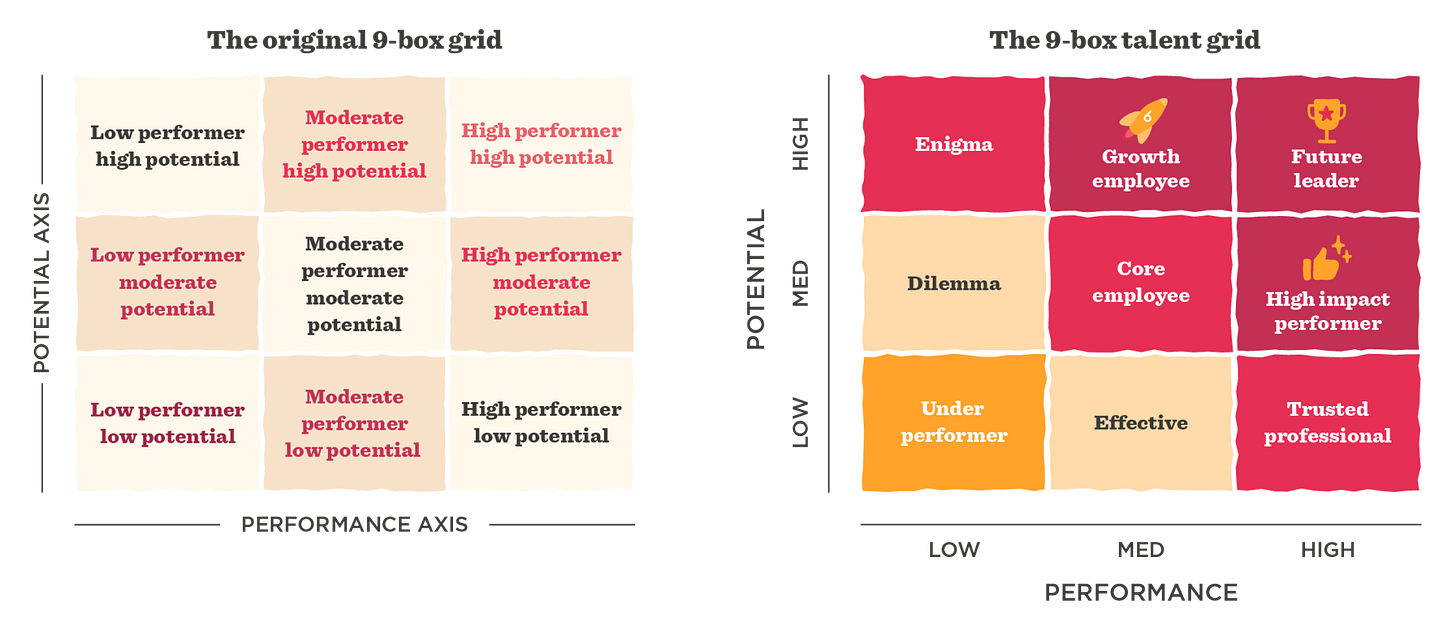

For those of you who don’t know, the 9-box is a tool HR uses to evaluate each individual's performance (low, medium, high) and potential (low, medium, high) to identify the areas of improvement and growth.

Here is one from CultureAmp:

Anyway, setting aside the cheesy names above (ahem, “Trusted Professional”), you can see how easy it is to say someone is a high performer or a future leader, and how difficult it is to say that anyone is an underperformer, a dilemma, or an enigma.

Solution:

Expand performance to a 5-point scale and potential to a 4-point scale. This lets top talent emerge from the Future Leader box and separates low performers from people who are still developing or who joined the company recently.
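To see why the wider grid helps, here is a small sketch that compares how crowded the biggest box is under each scale. The people, their ratings, and the mapping from the 5-point and 4-point scales back to low/medium/high are all assumptions made up for this example.

```python
from collections import Counter

# Assumed mapping from the finer scales back to the 3-point labels.
PERF_TO_3 = {1: "low", 2: "low", 3: "medium", 4: "high", 5: "high"}
POT_TO_3 = {1: "low", 2: "medium", 3: "high", 4: "high"}

# Hypothetical ratings as (performance 1-5, potential 1-4).
people = [
    (5, 4), (5, 3), (4, 4), (4, 3), (4, 3),
    (5, 3), (4, 4), (3, 2), (2, 1), (1, 1),
]


def largest_cell_share(cells):
    """Share of people sitting in the single most crowded cell."""
    counts = Counter(cells)
    return max(counts.values()) / len(cells)


# The same people, collapsed back onto the classic 3x3 grid.
coarse = [(PERF_TO_3[perf], POT_TO_3[pot]) for perf, pot in people]

coarse_share = largest_cell_share(coarse)
fine_share = largest_cell_share(people)

print(f"Most crowded box on the 3x3 grid: {coarse_share:.0%}")
print(f"Most crowded box on the 5x4 grid: {fine_share:.0%}")
```

In this toy data, the same ten people who pile into one box on the 3x3 grid spread across several boxes on the 5x4 grid, and the two people rated low on the coarse scale become distinguishable from each other.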

Okay, the next cycle came through, and we ended up with a better distribution, but there was still some dissatisfaction with the process and the definitions of the boxes.

Learnings:

Broader distribution allows you to find top and bottom talent

It generates some dissatisfaction with the process

But this negativity primarily comes from misunderstanding the scale, not from the act of ranking talent itself

Hypothesis 3: Manager training is the final missing piece

Okay, so we built out a new classification system to identify the top talent. It worked, but it created some confusion about how to use it effectively.

So, the next step was to test whether training would alleviate the issues.

This meant improving the training provided, building out the performance and compensation guides, drafting FAQs, and testing the training with champions in different departments. Then, of course, we trained the managers and the employees as well.

This process took months. But once done and ready for the new cycle, it produced better results:

Managers became used to the scale

They were able to communicate it to employees effectively

They established a new level of maturity for the organization

And employees finally understood what they did well and how they needed to improve

Key Takeaways

You need to test your solutions multiple times before they work well.

Sometimes, you must follow the leadership's will to find the data supporting your case. Other times, you will introduce a less-than-optimal solution that needs improvement.

The example above is only an illustration; there were more than 3 hypotheses to test and more components to optimize. But the moral is the same:

Always be testing.

See you next week!

K

Learn People Analytics in a Practical Way!

Check out my new Practical People Analytics Course that covers the most common questions I get from HR professionals:

What metrics should I use?

How do I measure engagement?

How do I make sure there is no bias in my comp?

What is the best way to measure performance?

How can I use advanced analytics to drive action?

Which means… you will have everything you need to build your data-driven HR function.